[1]:

%matplotlib inline

Hyperparameter optimization of neural networks with the Model class

[ ]:

try:
    import ai4water
except (ImportError, ModuleNotFoundError):
    !pip install ai4water[hpo]

[1]:

from ai4water.functional import Model

from ai4water.datasets import MtropicsLaos

from ai4water.utils.utils import get_version_info

from ai4water.hyperopt import Categorical, Real, Integer

[2]:

for k, v in get_version_info().items():
    print(f"{k} version: {v}")

python version: 3.9.7 | packaged by conda-forge | (default, Sep 29 2021, 19:20:16) [MSC v.1916 64 bit (AMD64)]

os version: nt

ai4water version: 1.06

lightgbm version: 3.3.1

tcn version: 3.4.0

catboost version: 0.26

xgboost version: 1.5.0

easy_mpl version: 0.21.3

SeqMetrics version: 1.3.3

tensorflow version: 2.7.0

keras.api._v2.keras version: 2.7.0

numpy version: 1.21.0

pandas version: 1.3.4

matplotlib version: 3.4.3

h5py version: 3.5.0

sklearn version: 1.0.1

shapefile version: 2.3.0

xarray version: 0.20.1

netCDF4 version: 1.5.7

optuna version: 2.10.1

skopt version: 0.9.0

hyperopt version: 0.2.7

plotly version: 5.3.1

lime version: NotDefined

seaborn version: 0.11.2

[3]:

Not downloading the data since the directory F:\data\MtropicsLaos already exists.
Use overwrite=True to remove previously saved files and download again

(258, 9)

[4]:

input_features = data.columns.tolist()[0:-1]

print(input_features)

['air_temp', 'rel_hum', 'wind_speed', 'sol_rad', 'water_level', 'pcp', 'susp_pm', 'Ecoli_source']

[5]:

output_features = data.columns.tolist()[-1:]

print(output_features)

['Ecoli_mpn100']
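Both selections assume that the target, `Ecoli_mpn100`, is the last column of `data`. Here is a minimal pandas sketch of the same split. It uses a hypothetical two-row frame that has the column names printed above. The values are placeholders and are not taken from the dataset.

```python
import pandas as pd

# Hypothetical frame: same column names as the notebook's `data`, placeholder values.
columns = ['air_temp', 'rel_hum', 'wind_speed', 'sol_rad', 'water_level',
           'pcp', 'susp_pm', 'Ecoli_source', 'Ecoli_mpn100']
toy = pd.DataFrame([[0.0] * len(columns), [1.0] * len(columns)], columns=columns)

# Every column except the last is an input; the last column is the target.
input_features = toy.columns.tolist()[:-1]
output_features = toy.columns.tolist()[-1:]

print(len(input_features), output_features)
```

If the column order changed, the slices would silently select the wrong target. Listing the target by name is the more robust choice.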

Build the model with a hyperparameter search space

[8]:

lookback = 14  # number of past time steps in each input window

model = Model(
    model={"layers": {
        "Input": {"shape": (lookback, len(input_features))},
        # Integer, Categorical and Real mark the hyperparameters to be tuned
        "LSTM": {"units": Integer(10, 30, name="units", num_samples=10),
                 "activation": Categorical(["relu", "elu", "tanh"], name="activation")},
        "Dense": 1
    }},
    lr=Real(0.00001, 0.01, name="lr", num_samples=10),
    batch_size=Categorical([4, 8, 12, 16, 24], name="batch_size"),
    epochs=500,
    ts_args={"lookback": lookback},
    input_features=input_features,
    output_features=output_features,
    x_transformation="zscore",
    y_transformation={"method": "log", "replace_zeros": True, "treat_negatives": True},
    val_metric="rmse"  # metric computed on the validation set
)

building DL model for regression problem using Model
Model: "model"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 Input (InputLayer)          [(None, 14, 8)]           0
 LSTM (LSTM)                 (None, 15)                1440
 Dense (Dense)               (None, 1)                 16
=================================================================
Total params: 1,456
Trainable params: 1,456
Non-trainable params: 0
_________________________________________________________________
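The parameter counts in the summary come from the layout of a Keras LSTM layer. It has four gates, and each gate has an input kernel, a recurrent kernel and a bias vector. The sketch below reproduces the counts for the 15-unit LSTM shown here. It also covers the 25-unit LSTM that is built later from the tuned parameters.

```python
def lstm_params(units: int, input_dim: int) -> int:
    # 4 gates (input, forget, cell, output), each with an input kernel
    # (input_dim x units), a recurrent kernel (units x units) and a bias (units).
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim: int, units: int = 1) -> int:
    # Weight matrix plus one bias per output unit.
    return input_dim * units + units


n_features = 8  # len(input_features)
for units in (15, 25):
    lstm = lstm_params(units, n_features)
    dense = dense_params(units)
    print(units, lstm, dense, lstm + dense)
```

The LSTM count grows roughly with the square of `units`. This is why the tuned 25-unit model has more than twice as many parameters as the 15-unit one.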

[8]:

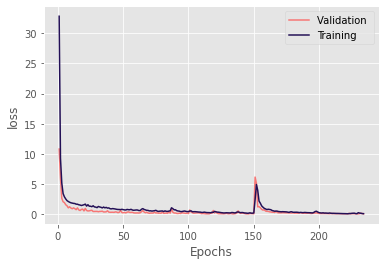

_ = model.fit_on_all_training_data(data=data, verbose=0)

***** Training *****

input_x shape: (136, 14, 8)

target shape: (136, 1)

***** Validation *****

input_x shape: (35, 14, 8)

target shape: (35, 1)

***** Validation *****

input_x shape: (35, 14, 8)

target shape: (35, 1)

********** Successfully loaded weights from weights_134_0.08508.hdf5 file **********
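With `lookback=14`, each sample is a window of 14 consecutive rows of the 8 inputs, which gives the `(..., 14, 8)` shapes. One window per possible start position turns the 258 rows of `data` into 258 - 14 + 1 = 245 windows. This equals the 136 training, 35 validation and 74 test samples the notebook reports (136 + 35 + 74 = 245). The NumPy sketch below builds such windows on random placeholder data. Pairing each window with the target at its last time step is an assumption made for illustration, not a description of ai4water's internals.

```python
import numpy as np


def make_windows(x: np.ndarray, y: np.ndarray, lookback: int):
    """Slice (T, n_features) inputs into (T - lookback + 1, lookback, n_features) windows.

    Assumption: each window is paired with the target at its final time step.
    """
    n_windows = len(x) - lookback + 1
    xs = np.stack([x[i:i + lookback] for i in range(n_windows)])
    ys = y[lookback - 1:].reshape(-1, 1)
    return xs, ys


rng = np.random.default_rng(0)
x = rng.normal(size=(258, 8))  # placeholder inputs: 258 rows, 8 features
y = rng.normal(size=258)       # placeholder target
xs, ys = make_windows(x, y, lookback=14)
print(xs.shape, ys.shape)
```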

[9]:

model.evaluate_on_training_data(data=data, metrics=['r2', 'nse', 'rmse'])

***** Training *****

input_x shape: (136, 14, 8)

target shape: (136, 1)

5/5 [==============================] - 0s 2ms/step

argument test is deprecated and will be removed in future. Please use 'predict_on_test_data' method instead.

[9]:

{'r2': 0.9383992599582605,

'nse': 0.9351325536411559,

'rmse': 2076.4358887285684}

[10]:

model.evaluate_on_test_data(data=data, metrics=['r2', 'nse', 'rmse'])

argument test is deprecated and will be removed in future. Please use 'predict_on_test_data' method instead.

***** Test *****

input_x shape: (74, 14, 8)

target shape: (74, 1)

3/3 [==============================] - 0s 2ms/step

[10]:

{'r2': 0.004700969723135662,

'nse': -0.032661562876391104,

'rmse': 27295.650642547433}
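On the test set the model does no better than predicting the mean: NSE is about -0.03, compared with 0.94 on the training data. To help read these numbers, the sketch below implements the three metrics with NumPy. RMSE and NSE follow their standard definitions. Computing `r2` as the squared Pearson correlation is an assumption about SeqMetrics, though it is consistent with `r2` and `nse` differing in the outputs above.

```python
import numpy as np


def rmse(obs, sim):
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return float(np.sqrt(np.mean((obs - sim) ** 2)))


def nse(obs, sim):
    # Nash-Sutcliffe efficiency: 1 - (sum of squared errors) / (sum of squared
    # deviations of the observations from their mean). NSE < 0 means the model
    # does worse than always predicting mean(obs).
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return float(1.0 - np.sum((obs - sim) ** 2) / np.sum((obs - obs.mean()) ** 2))


def r2_pearson(obs, sim):
    # Squared Pearson correlation (assumed definition of `r2`); blind to bias and scale.
    return float(np.corrcoef(obs, sim)[0, 1] ** 2)


obs = np.array([1.0, 2.0, 3.0, 4.0])
sim = 2 * obs + 5  # perfectly correlated but strongly biased predictions
print(r2_pearson(obs, sim), nse(obs, sim), rmse(obs, sim))
```

In this toy case `r2` is essentially 1 while NSE is -45. A high correlation-based score alone does not show that the predictions are close to the observations.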

[11]:

model.val_metric

[11]:

'rmse'
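`val_metric` is `'rmse'`, so the search scores each configuration by its validation RMSE and keeps the lowest. With `algorithm="random"`, configurations are drawn at random from the space declared in `Model(...)` rather than chosen based on earlier results. The sketch below illustrates the idea without ai4water. `objective` is a hypothetical stand-in for training the LSTM and returning its validation RMSE. Turning the numeric ranges into `num_samples` evenly spaced values is an assumption about what `num_samples` does, not a description of the library's sampler.

```python
import random

import numpy as np

# Search space mirroring the notebook. Discretising the numeric ranges into
# `num_samples` evenly spaced values is an assumption made for this sketch.
space = {
    "units": [int(v) for v in np.linspace(10, 30, 10)],
    "activation": ["relu", "elu", "tanh"],
    "lr": [float(v) for v in np.linspace(0.00001, 0.01, 10)],
    "batch_size": [4, 8, 12, 16, 24],
}


def objective(params):
    # Hypothetical placeholder for "train the LSTM, return validation RMSE".
    return (params["units"] - 25) ** 2 + 1000 * abs(params["lr"] - 0.01) + params["batch_size"]


def random_search(space, objective, num_iterations=100, seed=0):
    rng = random.Random(seed)
    history = []  # (score, params) for every iteration
    for _ in range(num_iterations):
        # Each hyperparameter is drawn independently; earlier scores are ignored.
        params = {name: rng.choice(values) for name, values in space.items()}
        history.append((objective(params), params))
    # Lower RMSE is better, so the best iteration is the argmin of the scores.
    best_iter = min(range(num_iterations), key=lambda i: history[i][0])
    return best_iter, history[best_iter][1], history


best_iter, best_paras, history = random_search(space, objective)
print(best_iter, best_paras)
```

Because the draws are independent, the trials could run in parallel. The trade-off is that, unlike Bayesian optimization, random search does not use earlier scores to steer later trials.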

[9]:

optimizer = model.optimize_hyperparameters(
    data=data,
    algorithm="random",
    num_iterations=100,
    process_results=False,
    refit=False,
)

Iteration No. Validation Score

total number of iterations: 100

0 17828.95533 17828.95533

1 16986.79110 16986.79110

2 22394144395.06774 22394144395.06774

3 199641.76044 199641.76044

4 133568722174052.40625 133568722174052.40625

5 18900.29079 18900.29079

6 42857348.18667 42857348.18667

7 18069.27824 18069.27824

8 11462458.58969 11462458.58969

9 18197.39146 18197.39146

10 401327.35925 401327.35925

11 100104.81291 100104.81291

12 23519115.01190 23519115.01190

13 23852.58460 23852.58460

14 519146.96604 519146.96604

15 23264.56258 23264.56258

16 212401.18056 212401.18056

17 18527.26423 18527.26423

18 18936.51034 18936.51034

19 18834.26070 18834.26070

20 18530.68979 18530.68979

21 179063.19349 179063.19349

22 3349561.75799 3349561.75799

23 74816.27374 74816.27374

24 167132767789469.25000 167132767789469.25000

25 9830938.86523 9830938.86523

26 2494690.56442 2494690.56442

27 18014.62606 18014.62606

28 18005.91192 18005.91192

29 12194428736.65494 12194428736.65494

30 242567.99565 242567.99565

31 298409.99104 298409.99104

32 15335.86228 15335.86228

33 585114774.40532 585114774.40532

34 15287.02718 15287.02718

35 3962250.86479 3962250.86479

36 183286.06118 183286.06118

37 16189.17021 16189.17021

38 509555325.81110 509555325.81110

39 20937.36411 20937.36411

40 1458506.16487 1458506.16487

41 18684.64696 18684.64696

42 17838.87620 17838.87620

43 18994.62998 18994.62998

44 15191.29488 15191.29488

45 17272896.59177 17272896.59177

46 18325.84361 18325.84361

47 18382.89031 18382.89031

48 16192.88436 16192.88436

49 18928.28233 18928.28233

50 24434.65343 24434.65343

51 71488.79498 71488.79498

52 1617668.51716 1617668.51716

53 49468.08005 49468.08005

54 17548.45908 17548.45908

55 18895.16848 18895.16848

56 19313.61551 19313.61551

57 114076.08633 114076.08633

58 16953.63371 16953.63371

59 3807648.40757 3807648.40757

60 18441.70057 18441.70057

61 26924.45253 26924.45253

62 14782.12163 14782.12163

63 168957104281.68372 168957104281.68372

64 17489.03382 17489.03382

65 18923.45508 18923.45508

66 54742.19433 54742.19433

67 17207.94328 17207.94328

68 17252.83884 17252.83884

69 59669.86509 59669.86509

70 24924.40154 24924.40154

71 17763.49695 17763.49695

72 18700.59593 18700.59593

73 16740.31780 16740.31780

74 15025.22412 15025.22412

75 183939092.75044 183939092.75044

76 18424.76855 18424.76855

77 33519.16180 33519.16180

78 131365.70999 131365.70999

79 18912.41286 18912.41286

80 58569.28888 58569.28888

81 7053212.09685 7053212.09685

82 7990078.49670 7990078.49670

83 60182.69997 60182.69997

84 16213.64462 16213.64462

85 18141.43721 18141.43721

86 17795.98427 17795.98427

87 14227.13520 14227.13520

88 17443.83077 17443.83077

89 14728.05486 14728.05486

90 769534059.71990 769534059.71990

91 188802.89625 188802.89625

92 43411.79637 43411.79637

93 1731196.70181 1731196.70181

94 19788.61895 19788.61895

95 18811.76653 18811.76653

96 34696.08208 34696.08208

97 16019.68090 16019.68090

98 5979226.75458 5979226.75458

99 71153273.03211 71153273.03211

0 is not equal to 100 so can not perform ranking
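Some trials end with validation RMSE values many orders of magnitude above the rest, for example about 1.7e14 at iteration 24. Many others sit between roughly 14,000 and 19,000. One plausible contributor is the log transformation of the target, offered here as a hypothesis rather than a diagnosis. The network predicts in log space and its output is exponentiated back, so an error of d in log space becomes a multiplicative factor of e^d in the original units. The minimal sketch below assumes a plain natural-log transform with zeros replaced by a small constant. ai4water's exact handling of `replace_zeros` and `treat_negatives` may differ.

```python
import numpy as np


def log_transform(y, eps=1e-6):
    # Assumption: zeros are replaced by a small positive constant before the log.
    y = np.asarray(y, dtype=float)
    return np.log(np.where(y == 0, eps, y))


def inverse_log_transform(z):
    # Predictions made in log space are mapped back with exp.
    return np.exp(z)


y_true = np.array([100.0, 1000.0, 10000.0])
z_true = log_transform(y_true)

rmses = []
for log_error in (0.1, 1.0, 5.0):
    # The same additive error in log space for every sample...
    y_pred = inverse_log_transform(z_true + log_error)
    # ...becomes a multiplicative error of exp(log_error) in the original units.
    rmses.append(float(np.sqrt(np.mean((y_pred - y_true) ** 2))))

print(rmses)
```

With a log-space error of 5, every prediction is about 148 times too large (e^5 ≈ 148.4). A few poorly converged trials can therefore dominate the RMSE scale.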

[10]:

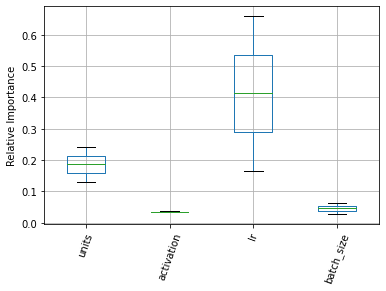

_ = optimizer.plot_importance()

[11]:

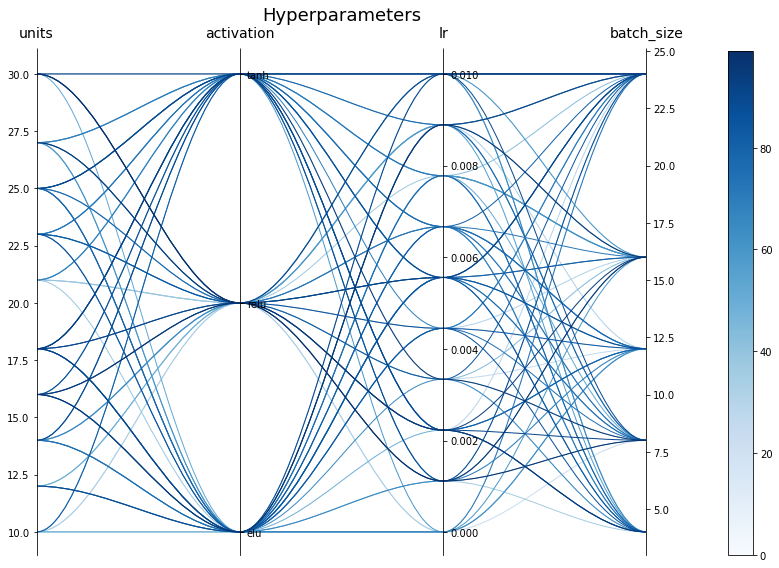

optimizer.best_paras()

[11]:

{'units': 25, 'activation': 'tanh', 'lr': 0.01, 'batch_size': 8}

[12]:

optimizer.best_iter()

[12]:

87

[13]:

optimizer._plot_parallel_coords(figsize=(12, 8))

[14]:

best = optimizer.best_paras()  # {'units': 25, 'activation': 'tanh', 'lr': 0.01, 'batch_size': 8}

model = Model(
    model={"layers": {
        "Input": {"shape": (lookback, len(input_features))},
        # fixed values taken from the best configuration found by the search
        "LSTM": {"units": best['units'],
                 "activation": best['activation']},
        "Dense": 1
    }},
    lr=best['lr'],
    batch_size=best['batch_size'],
    epochs=1000,
    ts_args={"lookback": lookback},
    input_features=input_features,
    output_features=output_features,
    x_transformation="zscore",
    y_transformation={"method": "log", "replace_zeros": True, "treat_negatives": True},
)

building DL model for regression problem using Model
Model: "model"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 Input (InputLayer)          [(None, 14, 8)]           0
 LSTM (LSTM)                 (None, 25)                3400
 Dense (Dense)               (None, 1)                 26
=================================================================
Total params: 3,426
Trainable params: 3,426
Non-trainable params: 0
_________________________________________________________________

[15]:

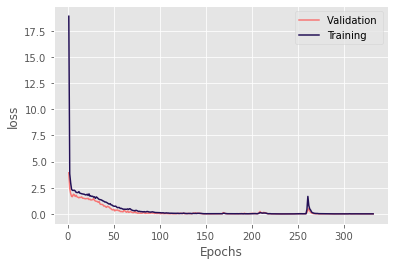

_ = model.fit_on_all_training_data(data=data, verbose=0)

***** Training *****

input_x shape: (136, 14, 8)

target shape: (136, 1)

***** Validation *****

input_x shape: (35, 14, 8)

target shape: (35, 1)

***** Validation *****

input_x shape: (35, 14, 8)

target shape: (35, 1)

********** Successfully loaded weights from weights_237_0.00062.hdf5 file **********

***** Test *****

input_x shape: (74, 14, 8)

target shape: (74, 1)

argument test is deprecated and will be removed in future. Please use 'predict_on_test_data' method instead.

3/3 [==============================] - 0s 2ms/step

{'r2': 0.0013408165145923254, 'nse': -0.028649883432676937, 'rmse': 27242.579613538805}
